TikTok found a way to make the fog of war shoppable

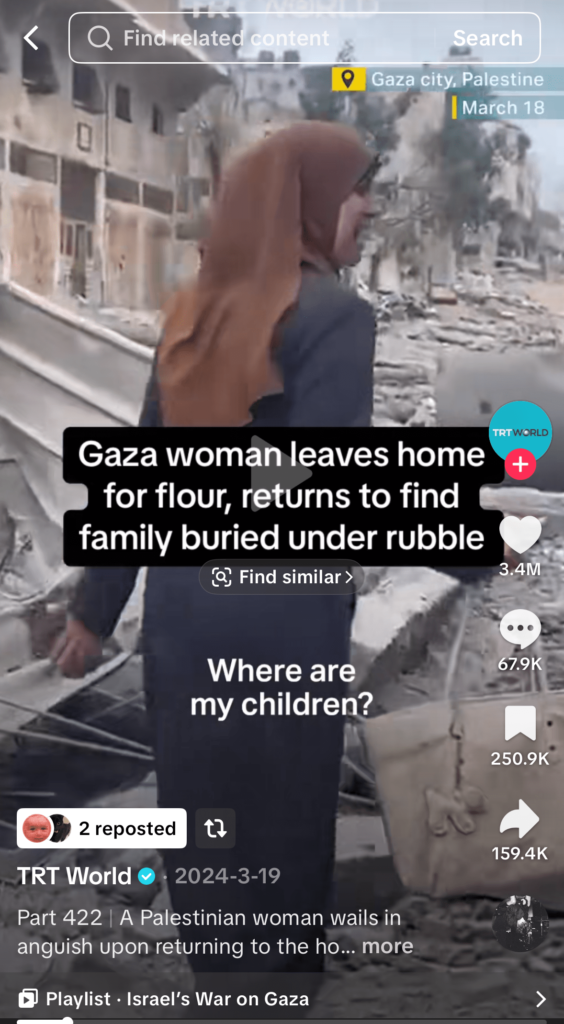

A Palestinian woman walks through rubble, crying for her missing family. Pause the clip and TikTok helpfully overlays a little button: “Find Similar.” Tap it, and the app goes hunting for visually similar videos—and, because this is TikTok in 2025, it also suggests “dupes” on TikTok Shop that look like the clothing she’s wearing. Welcome to war zones as mood board, and trauma as a look.

That’s not a hypothetical. The Verge flagged a video from Turkish broadcaster TRT World where this exact sequence plays out, and the result is every bit as dystopian as it sounds. TikTok’s computer vision saw fabric, not context; a silhouette, not suffering. The platform fused discovery and commerce so tightly that the algorithm can’t tell the difference between an outfit-of-the-day and a home that’s just been leveled.

To understand how we got here, you need to understand how TikTok has quietly turned everything into a shopping surface. The “Find Similar” prompt is a visual-search affordance: freeze a frame, and TikTok pulls up clips that look alike. With Shop welded into the core experience, that same intent can spill straight into product suggestions. It’s frictionless—by design. The model identifies garments, patterns, accessories, and colors at the frame level. If it looks like a dress, you get dresses. If it looks like a keffiyeh, you get keffiyeh-ish scarves. If a seller has the right metadata, price, and inventory attached, they’re in the carousel—no creator tag required.

Technically, it’s clever. But morally? It’s vacant.

You can see the incentive structure from space. TikTok has over a billion users and has been pushing Shop as the next big growth engine, subsidizing sellers and flooding the feed with buy flows. Visual search that seamlessly routes to commerce is the dream if you’re selling a blouse. It’s a nightmare when the blouse is worn by someone searching for her children in a building that just collapsed. The problem isn’t that computer vision is “bad”; it’s that context-blind recognition is doing exactly what it’s told, without any meaningful guardrails about where that recognition should not kick in.

This is an old tech problem wearing new clothes. Platforms have spent a decade learning that ad adjacency matters. YouTube built brand-safety controls after advertisers revolted about pre-rolls next to extremist content. News publishers flag “hard news” to avoid certain programmatic categories. Even Instagram, which also leans hard into shopping, tends to constrain product overlays to posts the creator explicitly tags or to clear merchant surfaces. TikTok, by contrast, is letting a system-level classifier throw shopping prompts on basically anything that looks merch-able in a paused frame—in the middle of fucking crisis footage.

Could TikTok avoid this? Of course. They already run large-scale classifiers to demote or label graphic violence, war, and “sensitive” content. They know when a video has captions about Gaza, when the audio references an airstrike, when the publisher is a news broadcaster, or when a clip is trending under conflict-related hashtags. Flip a switch and suppress Shop prompts on that inventory. If you want to be extra safe, suppress on all news flags, NGO accounts, and a target list of crisis events. This isn’t rocket science; it’s product policy.

What makes this extra gross is that creators don’t need to opt in. Nobody tagged a blouse for sale in that TRT World video. The platform did it anyway. That means sellers get traffic off tragedy without intent, viewers get whiplash, and TikTok gets to test whether moral disgust still converts at 3 percent. If it does, great for the dashboard; if it doesn’t, well, at least the experiment shipped.

And yes, before the “but this is just how algorithms work” chorus warms up: that’s the point. When you wire growth, engagement, and commerce into the same loop, you’d better think very carefully about the outer bounds of where those loops fire. A system that treats a bombed-out apartment like a street-style shot isn’t misfiring; it’s following orders in a world where no one bothered to write down, “do not put buy buttons on war.”

There’s also a reputational cost TikTok should care about if the ethics case isn’t getting through. TikTok Shop is already a magnet for counterfeiters, questionable supplements, and two-dollar mystery gadgets with twelve-dollar shipping. It doesn’t need “Gaza dupes” as a brand pillar. Regulators might not love it either. Commerce adjacency to crisis content is the sort of thing a bored committee staffer can turn into a hearing overnight, and TikTok does not have political goodwill to burn in the US.

Zoom out and this isn’t only a TikTok story. Google has tested product detection in YouTube. Meta has been chasing shoppable video since 2019, with varying success and plenty of cringe. Everyone wants to erase the line between discovery and checkout. The difference between “this is smart” and “this is unthinkable” is one architectural decision: should commerce overlays be default-on for everything a model can detect, or opt-in for content with explicit shopping intent? TikTok picked “default on.” The Verge’s reporting shows the exact kind of failure that choice guarantees.

What should happen next is boring and necessary. Turn off “Find Similar” product carousels on news, crisis, and war content, globally. Tie any shoppable overlay to explicit creator intent or to verified merchant posts. Build a “no commerce” label into the publisher workflow for broadcasters and NGOs. Let users switch off product suggestions from visual search entirely. And while you’re in there, add crisis keyword and entity filters to the Shop graph so sellers don’t get to piggyback on atrocity hashtags for ranking juice.

Will TikTok do this? If past is prologue, they’ll quietly tweak it, claim it only affected a “small subset” of videos, and tell us they take safety very seriously. Maybe they’ll even launch an initiative to donate Shop fees from news-adjacent inventory to relief funds for a month, like a corporate conscience coupon. Fine. But the real fix is system-level: stop letting the buy button wander into places it doesn’t belong.

There’s an old rule in advertising: context is content. On TikTok, context is apparently optional as long as you can ship a conversion. If the platform wants to be a marketplace and a media company at the same time, it needs to inherit the responsibilities of both. That starts with a line even an algorithm can understand: don’t dress up tragedy and send it to checkout.
